Analysis

Find more defects

Prevent inadvertently untested changes

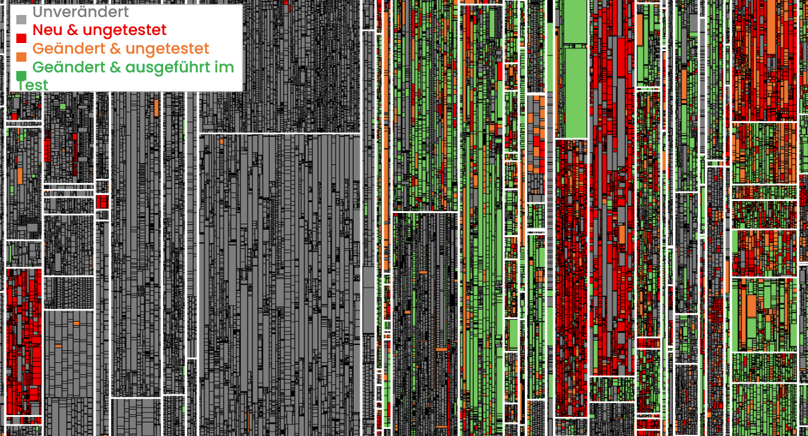

Defects, Changes and Tests

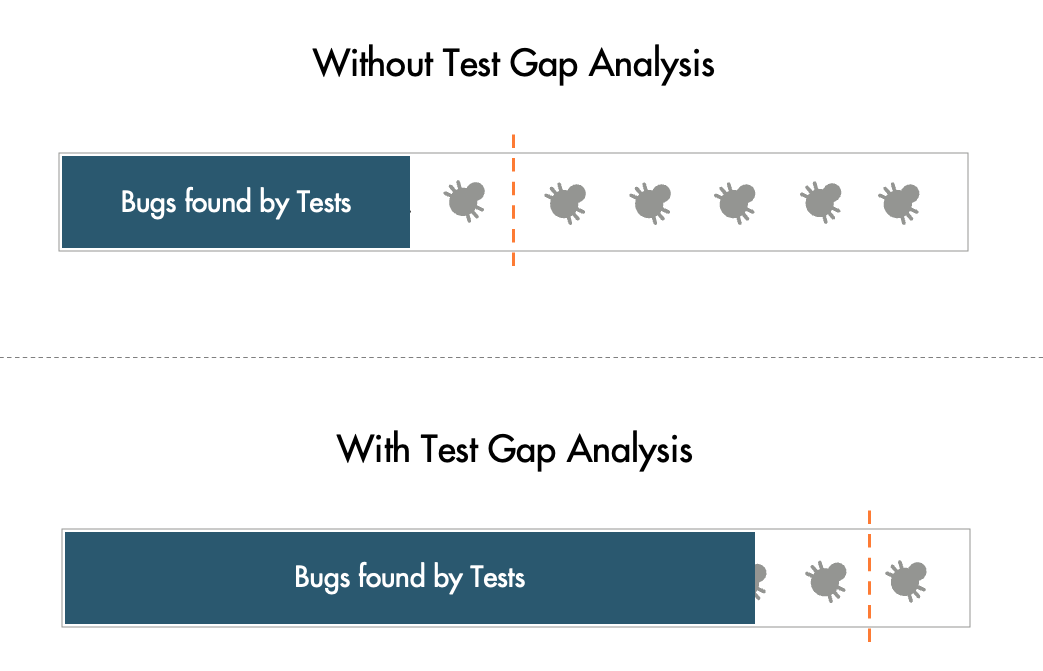

In a study with Munich Re, we accompanied two releases of a business information system. In both releases, over 50% of the changes were not tested. These Test Gaps slipped unnoticed through a very structured, multi-stage testing process. And they turned out to have a 5x higher defect probability than tested changes!

Test Gap Analysis

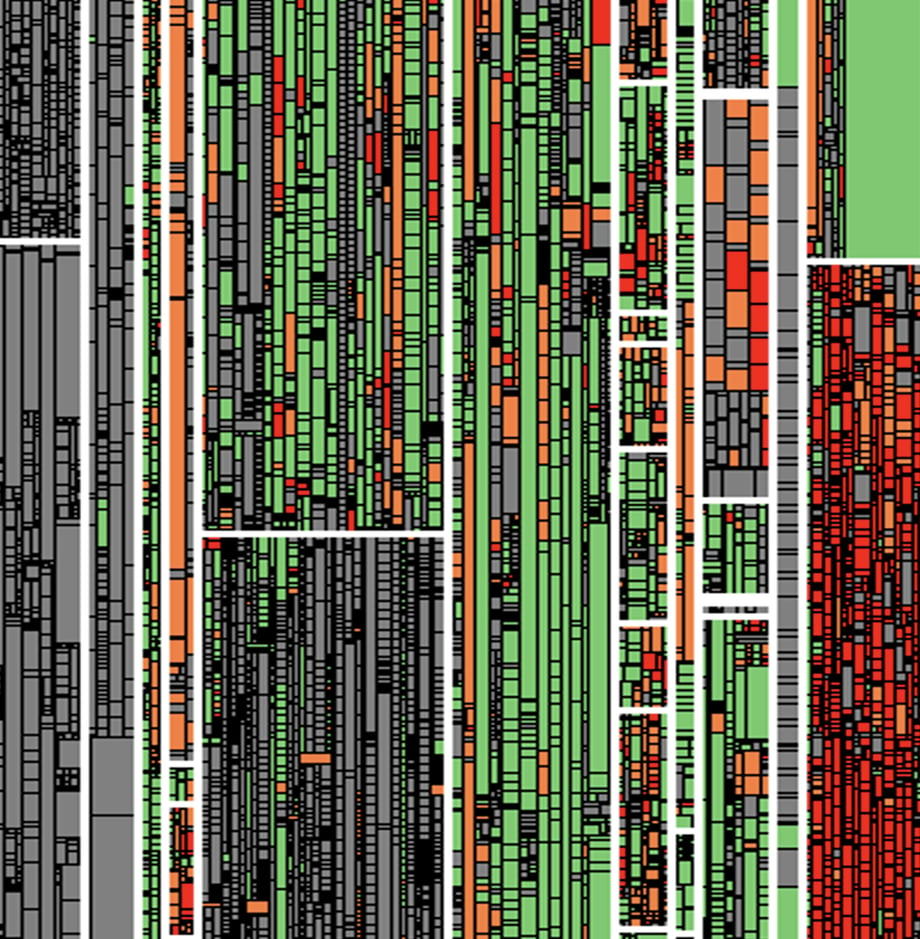

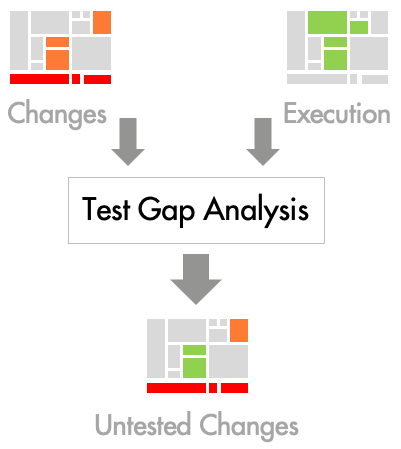

Teamscale’s Test Gap Analysis automatically collects all code changes from your version control system (e.g., Git or Subversion).

Coverage profilers record which code is executed in your test environments and send the data to Teamscale for analysis.

This works for all types of tests, even manual ones, as well as for all common technologies, platforms and programming languages.

Teamscale's Test Gap Analysis combines the data on code changes with the data on code executed in tests to show you all untested changes in your code.

Put it to Use

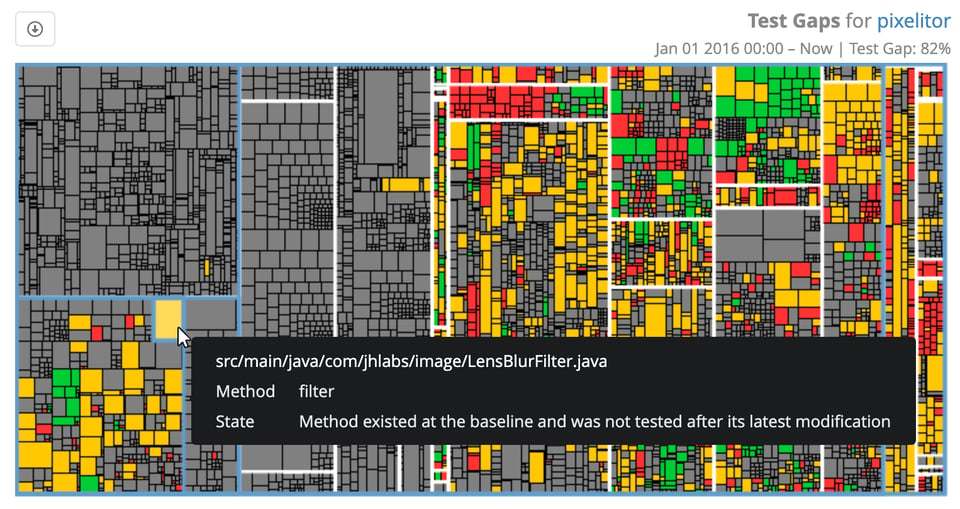

As a tester, you may focus on the Test Gaps in the functionality you're currently testing. You see which code has changed in this context and which of it has not been tested. Consider putting “no Test Gaps” on your Definition of Done!

As a test manager, you may monitor Test Gaps throughout the code base, for any time frame (e.g. since the latest release), to steer testing resources based on risks.

Test Gap Analysis shows you the gaps and who made the respective changes, to help you talk to the right people and come up with the right tests.

Types of Tests

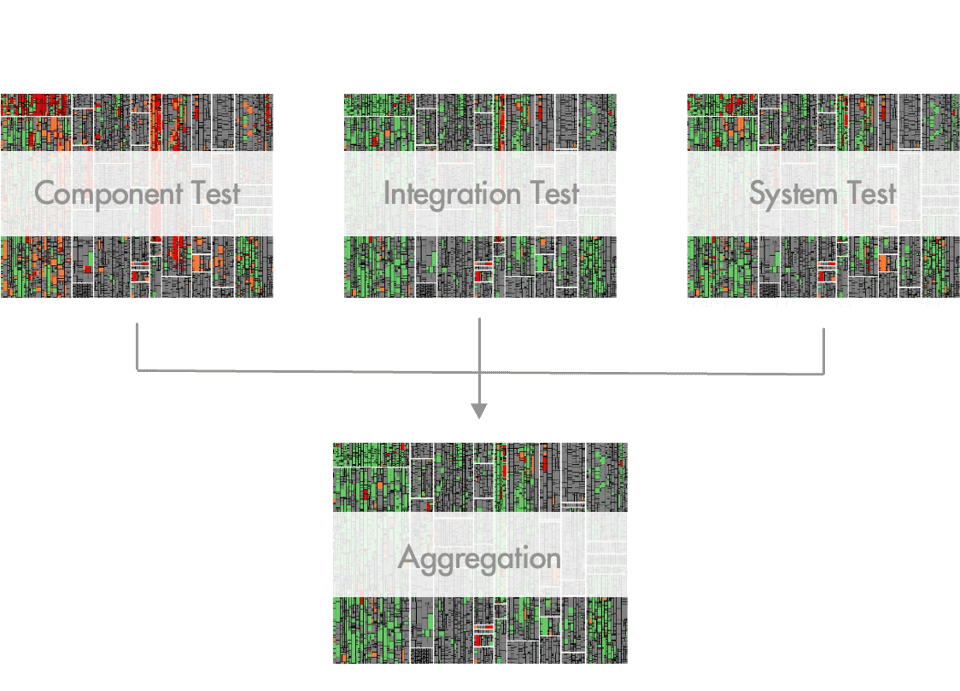

Test Gap Analysis may consider both automated and manual tests. The coverage profiler in your test environment is independent of what or who drives the tests.

Test Gap Analysis may consider tests on all test stages, from low-level unit tests in the CI to E2E acceptance tests in a pre-live environment. All you need is a coverage profiler in the respective environment.

Test Gap Analysis is applicable to all technologies, platforms and programming languages for which a coverage profiler exists, which is usually the case.

Experience Fewer Defects

Test Gap Analysis helps you effectively prevent defects from slipping into production.

Munich Re, for example, cut the number of production defects in half, thanks to Test Gap Analysis.

Test Gap Analysis helps you prevent untested changes from going into production without your knowledge. It is a powerful tool for replacing your testers’ gut feeling with facts everyone shares.

Munich Re, for example, has reduced their Test Gap from more than 50% to below 10% since they started using Test Gap Analysis.

Test Gap Analysis enables you to make risk-based decisions on where to invest your precious testing resources. Identify high-stakes gaps and systematic leaks to do the best job you can.

FAQs

Everything you need to know about Test Gap Analysis. Can’t find the answer you’re looking for? Please chat with our friendly team.

Most defects occur in new or changed code. Untested changes have a 5x higher likelihood of still containing a defect.

The reason usually is that information about changes is spread across different systems, teams and individuals. With such incomplete information, no one has the complete picture.

We say some code (a method, function, line, branch, ...) is covered, when it is executed by a test. Note that this is different from feature coverage, which does not consider the actual code.

A coverage profiler is a tool that attaches to your software system and records which parts of the code are executed at runtime. The technical approaches differ considerably, depending on the technology.
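As one possible illustration of such an approach, the following minimal Python sketch records which functions are called while a test runs, using the standard library's `sys.settrace` hook. The helper `record_executed_functions` and the sample functions are hypothetical names chosen for this example. Production profilers are considerably more sophisticated and work differently on other platforms.

```python
import sys


def record_executed_functions(func, *args, **kwargs):
    """Run func and return the names of all Python functions called meanwhile."""
    executed = set()

    def tracer(frame, event, arg):
        if event == "call":
            executed.add(frame.f_code.co_name)
        return None  # function-level recording only, no per-line tracing

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        func(*args, **kwargs)
    finally:
        sys.settrace(previous)  # always restore the previous trace hook
    return executed


# Hypothetical system under test
def add(a, b):
    return a + b


def subtract(a, b):
    return a - b


def run_test():
    assert add(2, 3) == 5


covered = record_executed_functions(run_test)
print("add" in covered)       # add was executed by the test
print("subtract" in covered)  # subtract was never executed
```

Note that the recording does not depend on what drives the execution. Whether `run_test` is an automated test or a stand-in for a manual interaction, the profiler sees the same calls.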

Teamscale's Test Gap Analysis automatically updates whenever new changes are made or additional tests are run. You may concentrate on developing your product and use the Test Gaps to steer your testing efforts.

No. Test Gap Analysis tells you where you missed things in your testing. As with any testing, it cannot guarantee the absence of defects. Nor does it assess the quality of your tests. You still need good people doing a good job testing!

No. Closing all Test Gaps should never be a goal in itself. Use the Test Gaps for informed decisions about whether you need additional tests and what for. And don't let "perfect" be the enemy of "good enough".

Would you like to exchange experiences on Test Gap Analysis?

Any complex analysis raises questions. Is it applicable to you at all? What experiences have other companies in your industry had with Test Gap Analysis? Are the technologies you use supported? To name just a few.

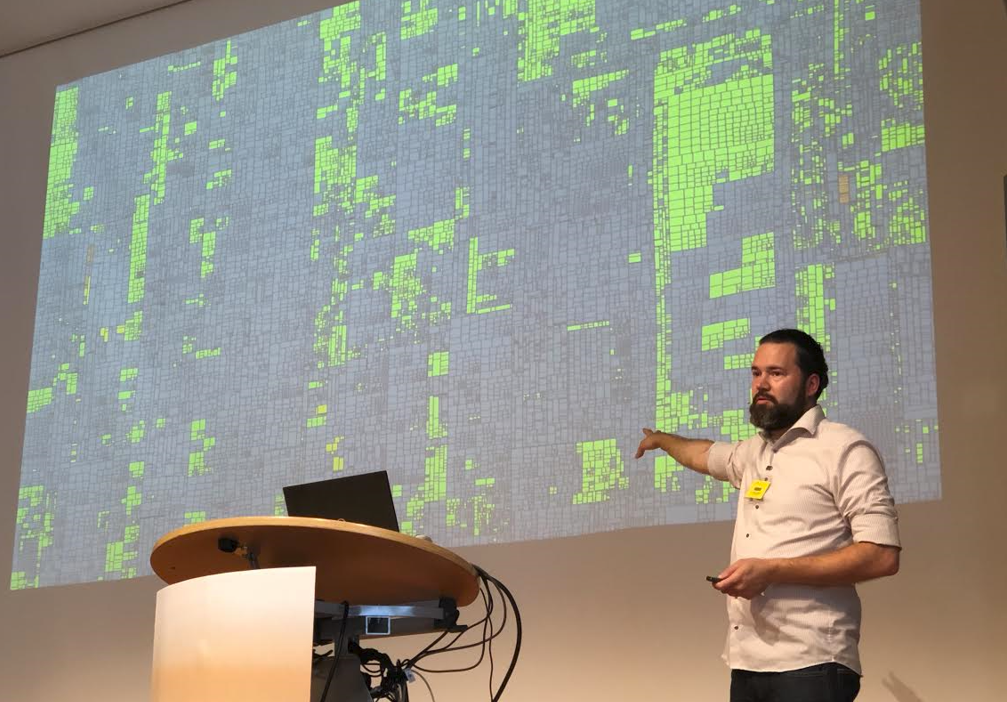

I have worked on Test Gap Analysis for over 10 years: writing research papers, speaking at industry conferences, talking to testers and test managers, and working with customers who have been using it for years.

I’m happy to chat with you about Test Gap Analysis!


