Best Practices for Introducing Test Gap Analysis

Test Gap Analysis identifies changed code that was never tested before a release. These code areas, the so-called test gaps, are often considerably more error-prone than the rest of the system, which is why test managers typically try to test them most thoroughly.

We have introduced Test Gap Analysis in a wide range of projects: from enterprise information systems to embedded software, from C/C++ to Java, C#, Python and even ABAP.

Along the way, we learned a lot about the factors that affect how complex the introduction is. In this post, I want to highlight a few important ones that I think are good to know before starting with Test Gap Analysis.

Integration Aspects

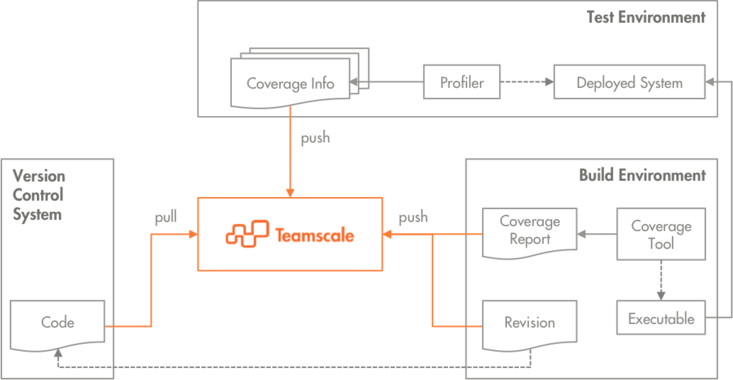

Test Gap Analysis combines static analysis for change detection with dynamic analysis to identify which parts of the system have been executed by tests. To collect the necessary data, Teamscale—the tool that implements Test Gap Analysis—has to be integrated in the development and test infrastructure. The following diagram provides an abstract overview of this integration.

As shown in the diagram, Teamscale connects directly to the version control system, from which it pulls and analyzes the changes.

One advantage of Test Gap Analysis is that it works for both automated and manual tests and can even provide an aggregated view of the test state across both.

Coverage from automated tests, such as unit tests, is often generated by coverage tools in the build. As depicted, the generated coverage reports are uploaded to Teamscale, together with information about the revision that has been built and tested.

To perform manual tests, a version of the system is built and deployed onto a test environment. Then, a profiler traces the parts of the system that are executed while the tests are being performed. As shown in the diagram, this coverage information is uploaded to Teamscale, again specifying which version of the system was tested.
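Conceptually, combining change detection with coverage from automated and manual tests boils down to a set operation. The following is a minimal sketch of that idea, not Teamscale's implementation; the `Method` type, the `find_test_gaps` function, and the method-level granularity are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Method:
    """A method identified by file and name (hypothetical granularity)."""
    file: str
    name: str


def find_test_gaps(changed, *coverage_sources):
    """Return changed methods that no coverage source reports as executed."""
    executed = set().union(*coverage_sources)
    return set(changed) - executed


# Methods changed since the last release (result of change detection).
changed = {
    Method("billing.py", "compute_tax"),
    Method("billing.py", "apply_discount"),
    Method("export.py", "write_csv"),
}

# Methods executed by automated tests and by manual tests, respectively.
unit_test_coverage = {Method("billing.py", "compute_tax")}
manual_test_coverage = {
    Method("export.py", "write_csv"),
    Method("ui.py", "render"),
}

gaps = find_test_gaps(changed, unit_test_coverage, manual_test_coverage)
print(sorted(m.name for m in gaps))  # prints ['apply_discount']
```

Because the coverage sources are merged before the comparison, a change counts as tested regardless of whether a unit test or a manual test executed it.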

"Which interfaces are used for integration?"

Teamscale can be used with several version control systems and is able to process the common coverage report formats for the programming languages it supports out of the box. Profilers for manual testing typically also provide some sort of coverage report. The code revision of the tested system has to be specified using a timestamp.
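For a Git repository, one way to obtain such a timestamp in a build script is to read the committer date of the revision that was built. The sketch below assumes Git is installed and uses the `%ct` format placeholder of `git log`; the function names are hypothetical. The post does not say which exact format an upload expects (for example seconds versus milliseconds), so that should be checked against the tool's documentation.

```python
import subprocess
from datetime import datetime, timezone


def commit_timestamp_seconds(revision="HEAD", repo_dir="."):
    """Return the committer date of `revision` as seconds since the Unix epoch."""
    output = subprocess.run(
        ["git", "log", "-1", "--format=%ct", revision],
        cwd=repo_dir,
        check=True,           # raise if git fails, e.g. unknown revision
        capture_output=True,
        text=True,
    ).stdout
    return int(output.strip())


def describe_timestamp(seconds):
    """Format an epoch timestamp as ISO 8601 in UTC, e.g. for build logs."""
    return datetime.fromtimestamp(seconds, tz=timezone.utc).isoformat()
```

In a build job, `commit_timestamp_seconds()` would be called in the checked-out working copy, and the result stored next to the coverage report so that both can be uploaded together.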

"Is there a profiler for my technology?"

Without knowing your technology: there probably is, either open source or commercial. We have introduced Test Gap Analysis for many customers with mainstream technologies such as Java, C/C++, .NET (C# or Visual Basic), Python, or SAP ABAP. Even for less obvious technologies such as databases or embedded systems, there are often tools that profile at a level sufficient for Test Gap Analysis. Typically, virtual machines (e.g., Java, C#, ABAP) facilitate the collection of test coverage data. But even for embedded systems it is often possible to collect test coverage, for example by using hardware tracing interfaces.

"What to do if I have source maps?"

Some compilers (e.g., for .NET) create executables that do not contain information about the source code they were built from. To interpret coverage information for such executables, so-called source maps or symbol files are required, which store additional debug information. In .NET, for instance, this information is contained in the .pdb files that are generated during the build. Teamscale interprets .pdb files and is thus able to calculate coverage on the source code.
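To illustrate the role of a symbol file, here is a toy sketch of the lookup idea. It does not reflect the actual .pdb format or how Teamscale processes it; the numeric method IDs, the `symbols` table, and `resolve_coverage` are all made up for this example.

```python
# Toy symbol table: method ID -> (source file, first line, last line).
# Real symbol files such as .pdb are binary and far richer; this only
# illustrates why the mapping is needed.
symbols = {
    1: ("Billing.cs", 10, 24),
    2: ("Billing.cs", 26, 40),
    3: ("Export.cs", 5, 18),
}

# Method IDs that a (hypothetical) profiler recorded as executed.
executed_ids = [1, 3, 99]


def resolve_coverage(executed, symbol_table):
    """Map executed method IDs to source ranges; collect unknown IDs."""
    covered, unresolved = {}, []
    for method_id in executed:
        entry = symbol_table.get(method_id)
        if entry is None:
            unresolved.append(method_id)
            continue
        file, first, last = entry
        covered.setdefault(file, []).append((first, last))
    return covered, unresolved


covered, unresolved = resolve_coverage(executed_ids, symbols)
print(covered)     # source ranges that were executed, grouped by file
print(unresolved)  # IDs without a symbol entry
```

In this toy model, an ID that cannot be resolved hints that the symbol file and the profiled binary may not come from the same build, which is one reason to keep both together.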

System Architecture

The system architecture has an impact on where coverage information has to be collected in manual tests. The easiest scenario is a server application, where coverage only has to be collected on the server. The more environments have to be considered, the more complex the setup needed to collect the coverage data. For instance, coverage for fat-client applications has to be collected on all clients.

We have never encountered a setup where Test Gap Analysis was not applicable. However, we strongly suggest starting with an easy setup for the first integration to get results quickly. More complex scenarios can then be approached gradually.

Testing Process

The goal of Test Gap Analysis is to provide an exhaustive view of the test state of the analyzed system. Clearly defined test phases facilitate planning and monitoring, as they help to make sure that all relevant test activities are covered.

For productive use, all test environments where manual testing happens should be integrated. Hence, their number has a direct impact on the complexity of the overall setup. Some of our customers even spin up virtual clients as test environments on the fly. In such scenarios, we make sure that profilers are automatically installed and coverage is collected at the right time. However, to get started with Test Gap Analysis, we suggest a scenario with only one or a few controlled environments.

Summary

If I could give you only one piece of advice for the integration of Test Gap Analysis, it would be: start small.

Test Gap Analysis combines information from several environments and tools, so there are potentially many factors—even beyond the ones covered in this blog post—that affect the complexity of the technical integration.

Our experience shows that Test Gap Analysis should always be introduced step by step. Many of our customers started with a single system and expanded afterwards. Good rules of thumb for a successful evaluation are:

- Start with a small, controlled system or even parts of a system.

- Do not start with the most difficult technology.

- Choose a system whose team is eager to try new approaches.

- Strive for a quick proof of concept.

- Let us help.

Let me underline the last point as a closing remark: We are happy to help integrate Test Gap Analysis into your environments and introduce it to your teams. We have done this many times already, for many systems and technologies, so we are confident that we can handle your specific case. Feel free to contact us.
