A New Perspective on Tests

Software is made of code. Well, yes, but not exclusively. Software engineering involves working with many other artifacts, such as models, architectures, tickets, build scripts, … and tests.

The goal of Teamscale is to provide meaningful and useful information about all aspects of software engineering. This is what we call »Software Intelligence«.

Consequently, in addition to providing profound insights into code quality, Teamscale performs many other sophisticated analyses, including architecture conformance analysis, analysis of issue tracker data, team evolution analysis, code ownership analysis, and data taint security analysis.

Moreover, Teamscale contains a number of analyses for test quality, such as Test Gap Analysis and analysis of Ticket Coverage. With version 3.9, we added a new home for questions related to tests in Teamscale’s UI, which we call the »Tests« perspective. In the following, I’ll briefly go over the two main features you can find there and give an outlook on what’s on the roadmap.

Test Gap Analysis

Test Gap Analysis identifies changed code that was never tested before a release. Often, these code areas (the so-called »test gaps«) are considerably more error-prone than the rest of the system, which is why test managers typically try to test them most thoroughly.

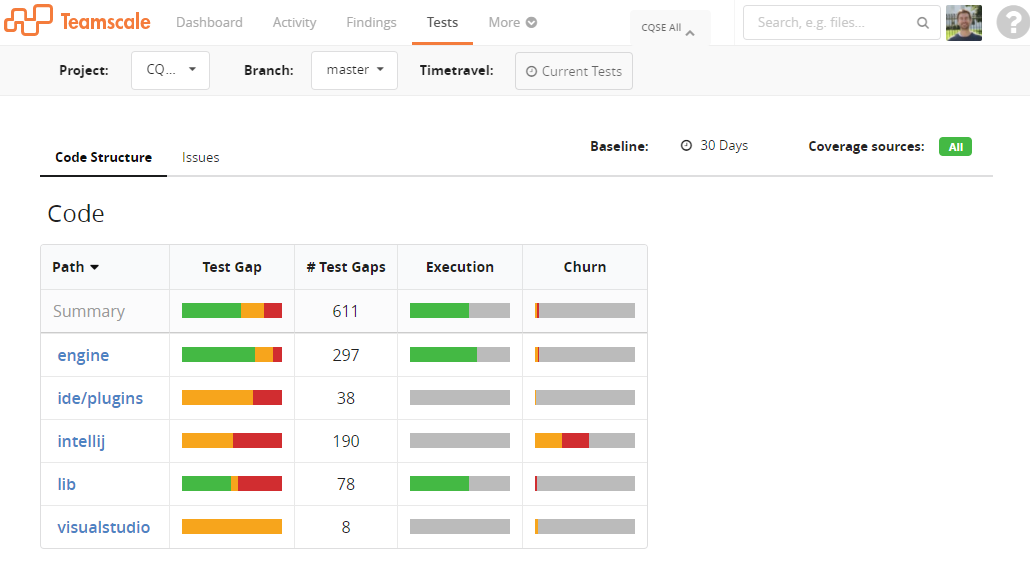

Teamscale continuously searches for test gaps based on all changes to the code and all test execution information available. The results are now shown on the Tests perspective:

As depicted in the figure above, the Tests perspective shows several metrics about test gaps for every folder in the system:

- an indicator for the ratio of tested vs untested changes (»Test Gap«)

- the number of untested changes (»# Test Gaps«)

- the portion of the code that has been executed by tests (»Execution«)

- the portion of the code that has been changed (»Churn«)

Red depicts new code, orange shows the portion of code that has been changed, and green corresponds to code that has been executed.
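To make the four metrics above more tangible, here is a minimal Python sketch of how they could be aggregated per folder. It is not Teamscale's implementation: the `Method` record, the `folder_metrics` function, and the choice to count methods rather than lines are assumptions made purely for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from pathlib import PurePosixPath


@dataclass(frozen=True)
class Method:
    """Hypothetical record for one method in the analyzed system."""
    path: str       # file path relative to the project root
    name: str
    changed: bool   # added or modified since the selected baseline
    executed: bool  # executed by at least one test since its last change


def folder_metrics(methods):
    """Aggregate test gap metrics per top-level folder.

    Assumption: every method counts equally; a real analysis may
    weight by lines of code instead.
    """
    counts = defaultdict(lambda: {"total": 0, "changed": 0, "executed": 0, "gaps": 0})
    for m in methods:
        c = counts[PurePosixPath(m.path).parts[0]]
        c["total"] += 1
        c["changed"] += int(m.changed)
        c["executed"] += int(m.executed)
        # A test gap is a changed method that no test has executed.
        c["gaps"] += int(m.changed and not m.executed)

    metrics = {}
    for folder, c in counts.items():
        metrics[folder] = {
            # share of changed methods that are still untested
            "test_gap": c["gaps"] / c["changed"] if c["changed"] else 0.0,
            "num_test_gaps": c["gaps"],
            "execution": c["executed"] / c["total"],
            "churn": c["changed"] / c["total"],
        }
    return metrics
```

A folder without any changes gets a test gap ratio of 0 in this sketch, since there is nothing that could have been left untested.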

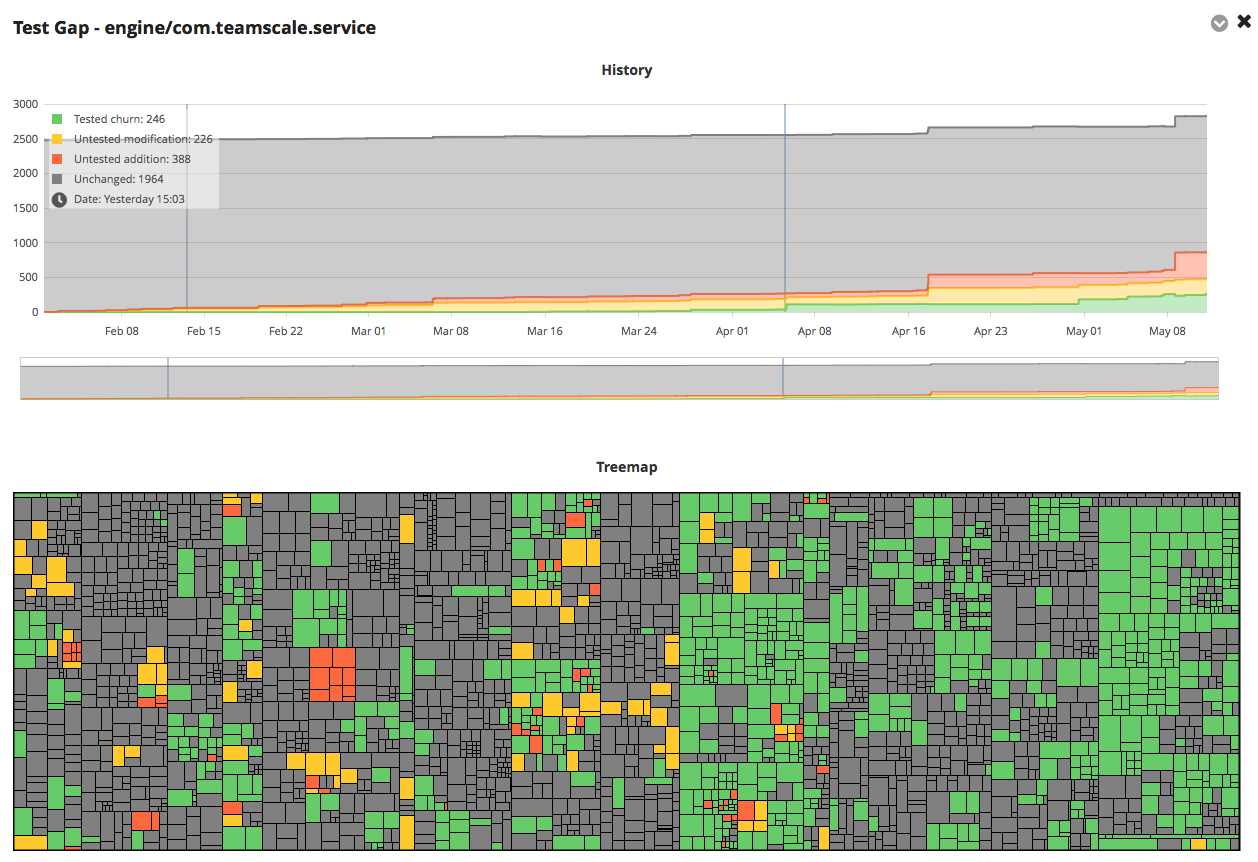

This information is based on the baseline and coverage sources selected at the top. Drilling down to subfolders is a matter of clicking the corresponding link. Clicking on one of the table cells brings up a more detailed view of the underlying data:

The trend chart at the top depicts how the portion of added, changed, and tested code develops over time. This helps you understand whether development and testing efforts are balanced.

The treemap highlights the concrete test gaps in the source code of the system: Each rectangle corresponds to a single method in the code. The bigger the rectangle, the longer the method in lines of code. Red and orange rectangles are untested changes (»test gaps«), i.e., the methods that are most error-prone on average. Hovering over a rectangle reveals the name of the underlying method, and a click takes you directly to the source code.

Test managers frequently work with this system-wide test gap overview to get a grasp of the overall status. Testers, however, often need a more specific view for their daily work, which I cover in the next section.

Ticket Coverage

To ensure that no critical functionality is left untested, testers continuously have to answer the question »Where are there still relevant gaps in the tests I am supposed to perform?«

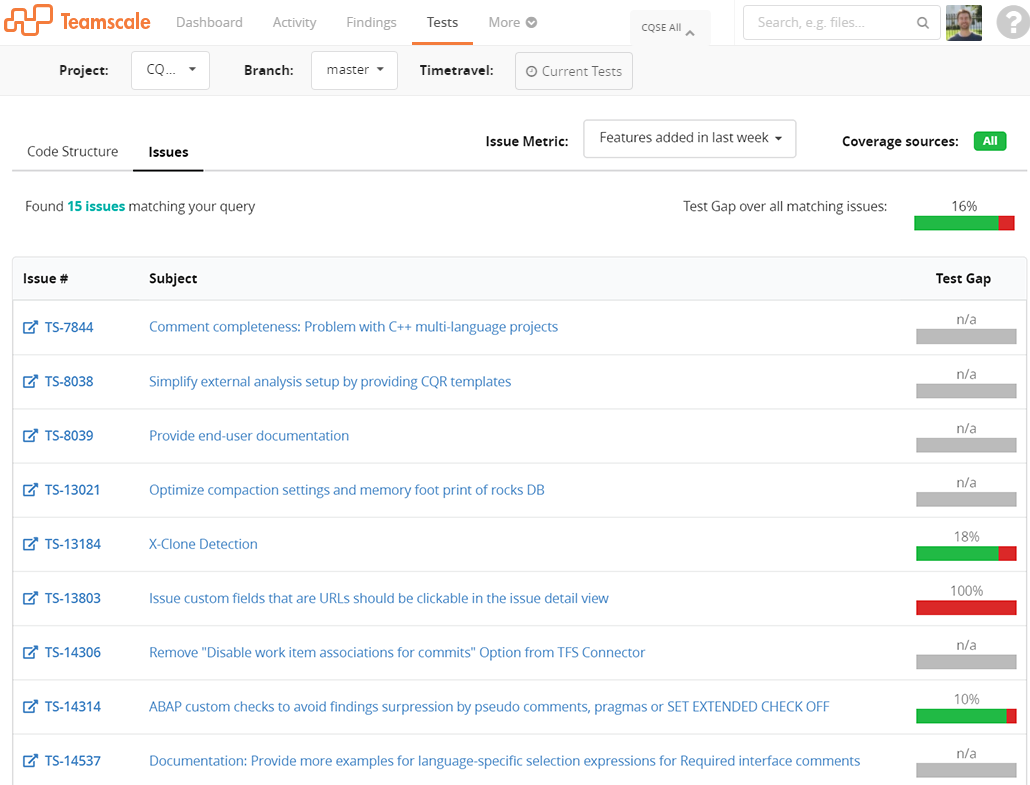

Ticket Coverage is a specialization of the Test Gap Analysis that allows us to determine, for a single ticket (i.e., bug report, user story, issue, feature request, etc.), which changes have not been sufficiently tested. This gives testers a better understanding of what their tests missed:

As depicted in the figure above, for every ticket matching a given query, the Tests perspective shows the test gap percentage together with general information such as the subject and the ticket number. That way, testers can see at a glance whether specific tickets were not sufficiently tested.
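The per-ticket test gap percentage can be illustrated with a small Python sketch. It assumes that changes are linked to tickets via ticket IDs in commit messages and that changed and executed methods are available as plain names. The ID pattern, the function name `ticket_test_gaps`, and the data layout are hypothetical and not taken from Teamscale.

```python
import re
from collections import defaultdict

# Hypothetical ticket ID format such as "TS-42"; adapt to your issue tracker.
TICKET_ID = re.compile(r"\b[A-Z]+-\d+\b")


def ticket_test_gaps(commits, executed_methods):
    """Return {ticket: (gap_percentage, sorted_untested_methods)}.

    commits: iterable of (commit_message, changed_method_names) pairs
    executed_methods: names of methods executed by tests after the changes
    """
    executed = set(executed_methods)
    changed_by_ticket = defaultdict(set)
    for message, changed_methods in commits:
        for ticket in TICKET_ID.findall(message):
            changed_by_ticket[ticket].update(changed_methods)

    result = {}
    for ticket, changed in changed_by_ticket.items():
        if not changed:
            continue  # no code changes recorded for this ticket
        untested = changed - executed
        result[ticket] = (100.0 * len(untested) / len(changed), sorted(untested))
    return result
```

A ticket with a percentage of 0 has had all of its changed methods executed by some test, while 100 means that none of its changes were tested.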

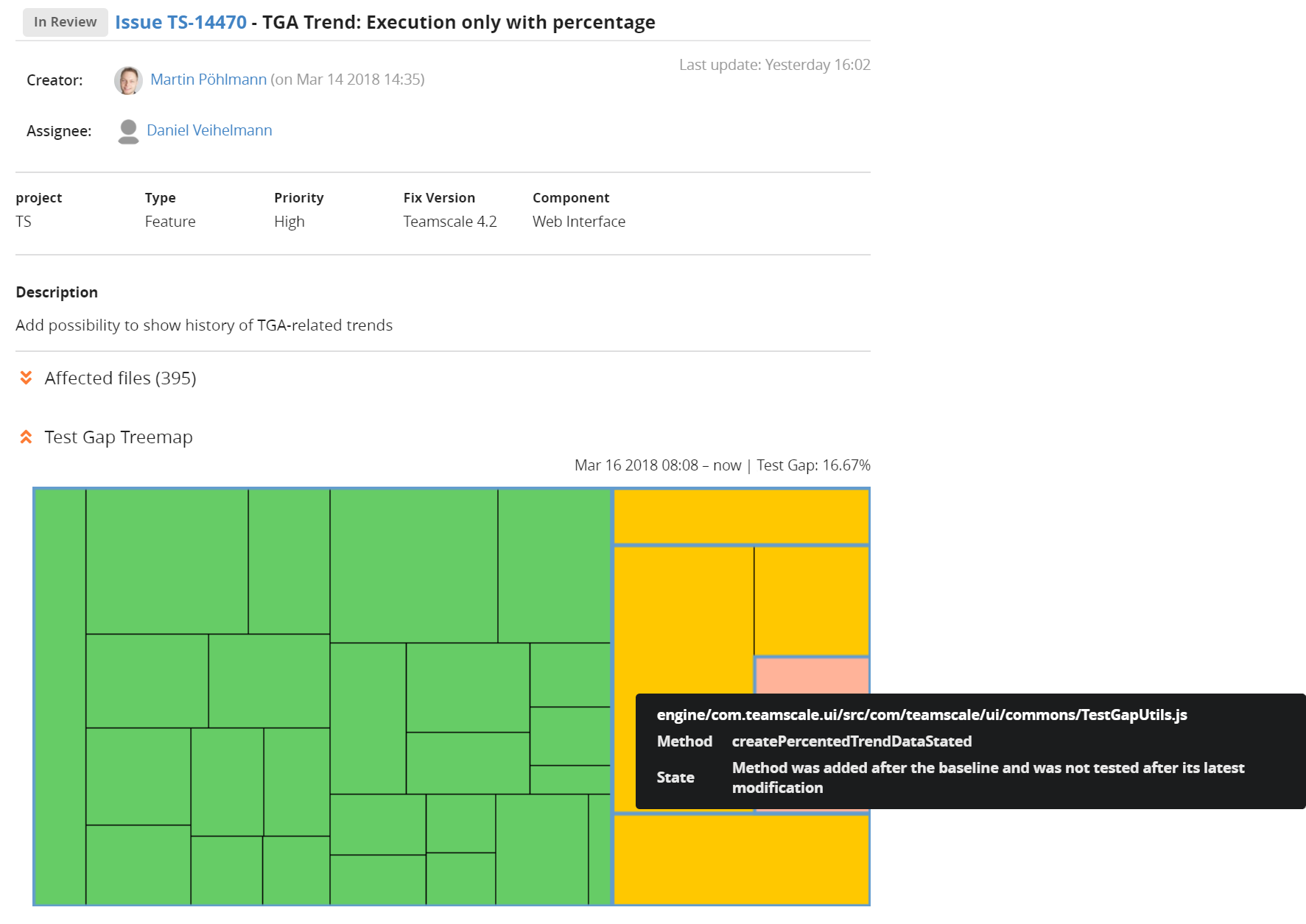

Clicking on one of the tickets brings up a more detailed view of the underlying data, including a treemap that visualizes which part of the code changed in the course of the ticket was actually tested:

Future Work

Tests are becoming first-class citizens in Teamscale. We will implement more and more functionality that helps to deal with automated and manual tests in the future. I want to highlight two features we already have on the roadmap, which you will most likely find on the Tests perspective in one of our future releases:

Test Impact Analysis

While automated test suites have tremendous benefits, more and more companies »suffer« from their test suites being too large to be executed frequently, say, with every commit. In some cases, executing the whole test suite even takes multiple days.

Test Impact Analysis tries to order the test suite in an intelligent way, such that the tests most likely to find bugs are executed first.
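One simple way to approximate such an ordering is sketched below in Python. The heuristic is an assumption chosen for illustration, not the algorithm planned for Teamscale: tests that covered more of the recently changed methods in a previous run go first, and faster tests win ties. The function name `prioritize_tests` and all parameter names are hypothetical.

```python
def prioritize_tests(coverage_by_test, changed_methods, duration_by_test):
    """Order tests so that those touching the most changed code run first.

    coverage_by_test: {test_name: methods the test executed in a previous run}
    changed_methods: methods changed since that run
    duration_by_test: {test_name: runtime in seconds}, used to break ties
    """
    changed = set(changed_methods)

    def sort_key(test):
        hits = len(changed & set(coverage_by_test[test]))
        # More hits first, then shorter runtime, then name for a stable order.
        return (-hits, duration_by_test.get(test, float("inf")), test)

    return sorted(coverage_by_test, key=sort_key)
```

With an ordering like this, a time-boxed run can execute only the first few tests and still exercise most of the changed code.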

Integration of Test Results

With every execution of automated tests, a lot of data is produced that might help to understand certain effects and hunt down problems. This includes test results (i.e., which tests passed, failed, or were ignored), test run times, and information about which code fragments are covered by which tests. In future releases, we plan to process and integrate more and more of these results in the Tests perspective.
