Bridge your Test Gaps with Teamscale

Many companies employ sophisticated testing processes, but bugs still find their way into production. Often they hide among the subset of changes that were not tested. In fact, we found that untested changes are five times more error-prone than the rest of the system.

To keep untested changes out of production, we came up with Test Gap analysis: an automated way of identifying changed code that was never executed before a release. We call these areas test gaps. Knowing them allows test managers to decide consciously which test gaps have to be tested before shipping and which ones are low-risk and can be left untested.

A short while ago, we introduced Test Gap analysis into our code quality software Teamscale. In the following, I want to give you a quick overview of this feature, and show you how to get things started. To get a more in-depth overview of Test Gap analysis first, refer to my colleague Fabian’s post. To see Test Gap analysis in action, have a look at our workshop.

Prerequisites

Obviously, you first need Teamscale. If you don’t have a copy yet, go ahead and get your free trial here.

To use Test Gap analysis, your project should be connected to your source code repository, and you should upload test coverage regularly.

Please refer to the getting started guide for information on setting up projects in Teamscale. Information on uploading test coverage can be found here. Contact us if you need further assistance.

Test Gap analysis in action

Test Gap analysis is helpful in several scenarios. When developing new features, it helps to make sure that new code and the changes made along the way have been tested. Similarly, Test Gap analysis helps to make sure that hotfixes are tested before shipping. In the remainder of this post, I want to show you how to find test gaps in your newly developed features.

Which changes are considered?

Test Gap analysis works on changes. To define the time span it covers, we set a baseline and an end date. The baseline is the date from which code changes are investigated, and the end date marks the other end of the time frame. All changes made to the system between baseline and end date are considered in the Test Gap analysis.
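To make the time frame concrete, here is a minimal sketch of how such a window could select changes. The `Change` record, the function name, and the inclusive boundaries are assumptions made for illustration; they are not Teamscale's implementation or API.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Change:
    """Hypothetical record of one method-level change (not a Teamscale type)."""
    method: str
    changed_at: datetime


def changes_in_window(changes, baseline, end_date=None):
    """Return the changes made between baseline and end_date.

    Both boundaries are treated as inclusive (an assumption).
    end_date=None models an open-ended time frame, so every change
    from the baseline onwards is included.
    """
    return [
        c for c in changes
        if c.changed_at >= baseline
        and (end_date is None or c.changed_at <= end_date)
    ]


changes = [
    Change("Render", datetime(2016, 3, 10)),
    Change("Load", datetime(2016, 1, 5)),
    Change("Save", datetime(2016, 4, 20)),
]

window = changes_in_window(
    changes,
    baseline=datetime(2016, 3, 1),
    end_date=datetime(2016, 3, 31),
)
print([c.method for c in window])  # ['Render']
```

Leaving `end_date` out of the call would also pick up `Save`, because the window then stays open towards the future.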

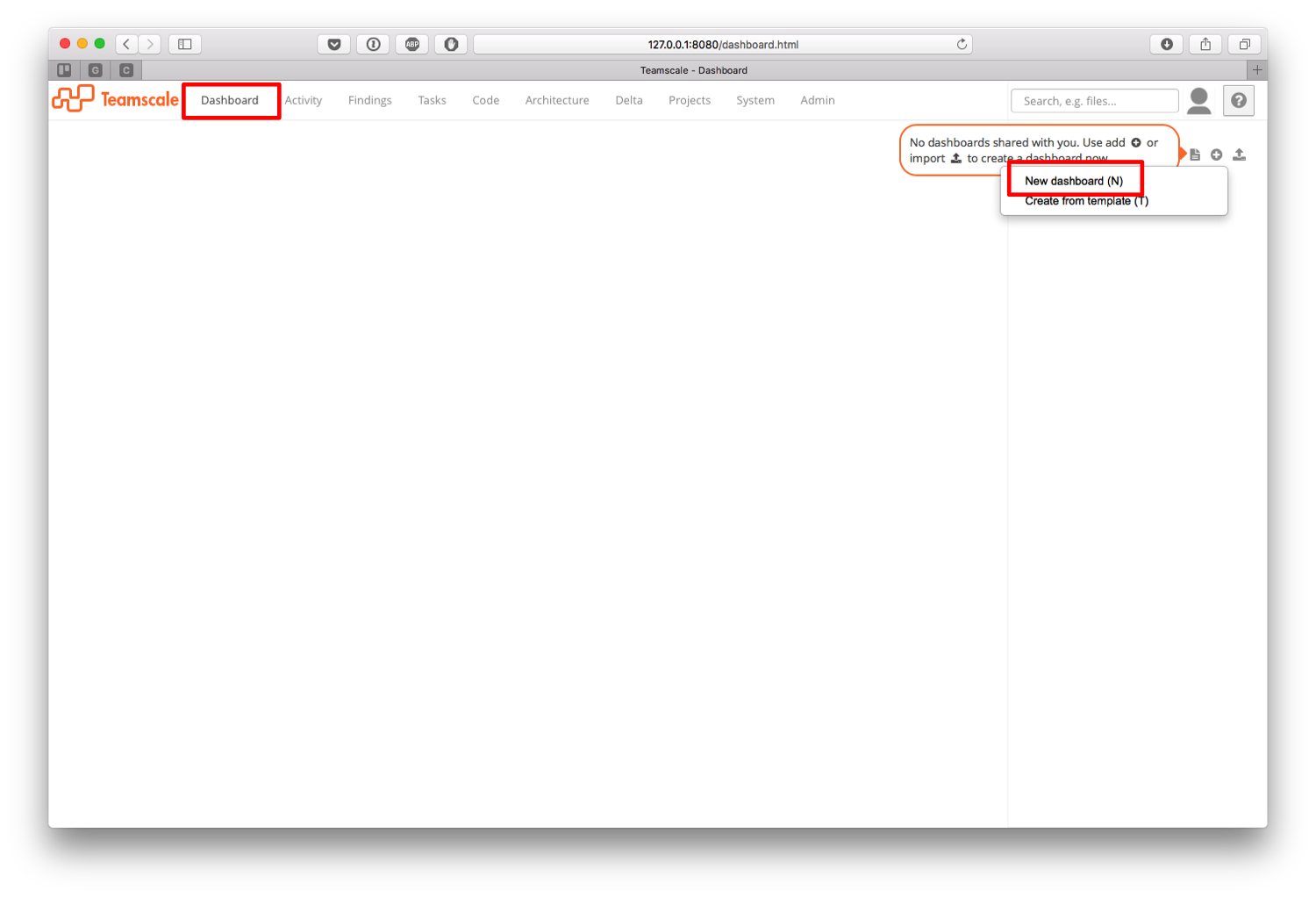

Create a dashboard

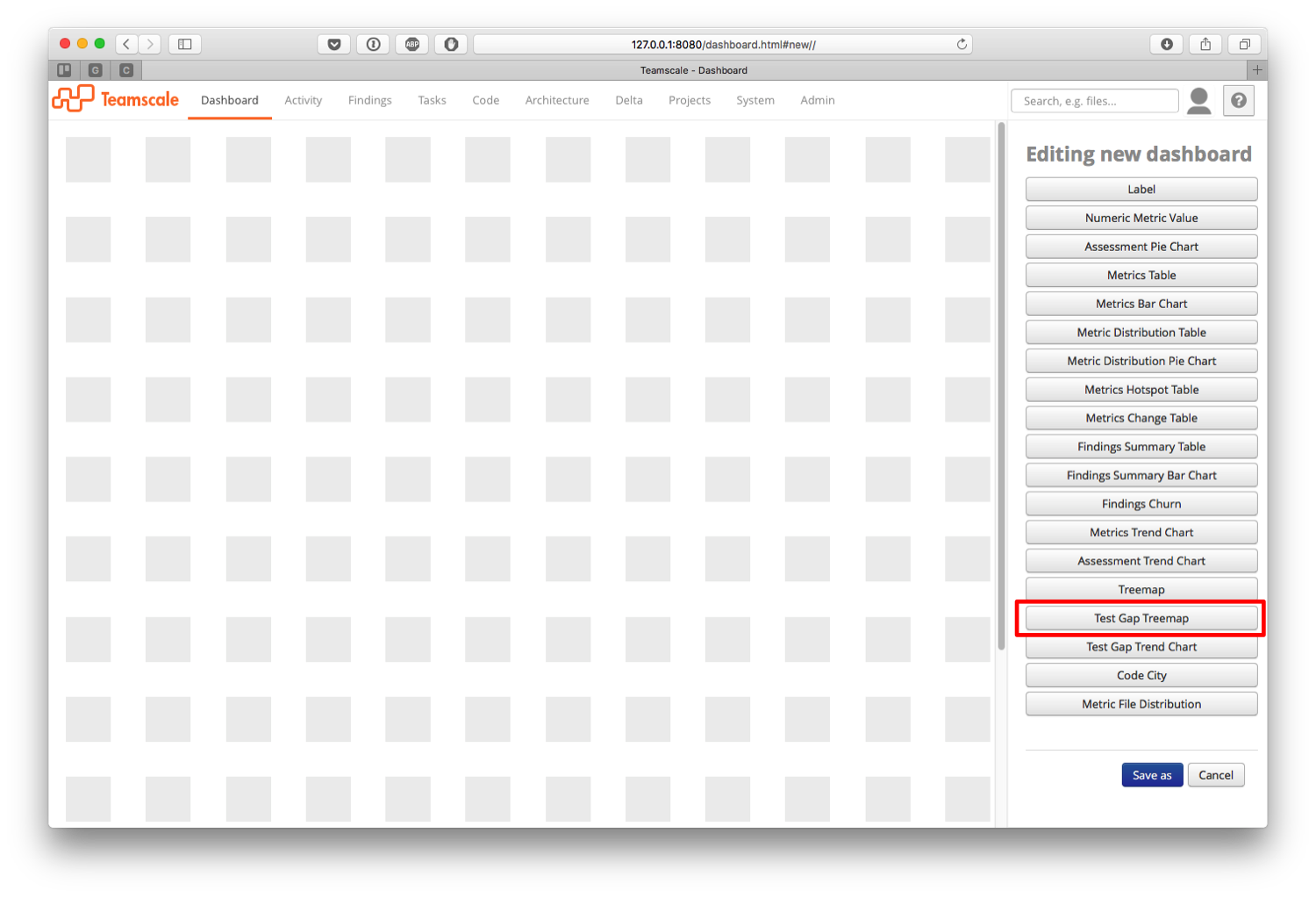

In Teamscale, we use dashboards to visualize test gap related information. To create one, switch to the Dashboard perspective, click the plus icon, and select New dashboard.

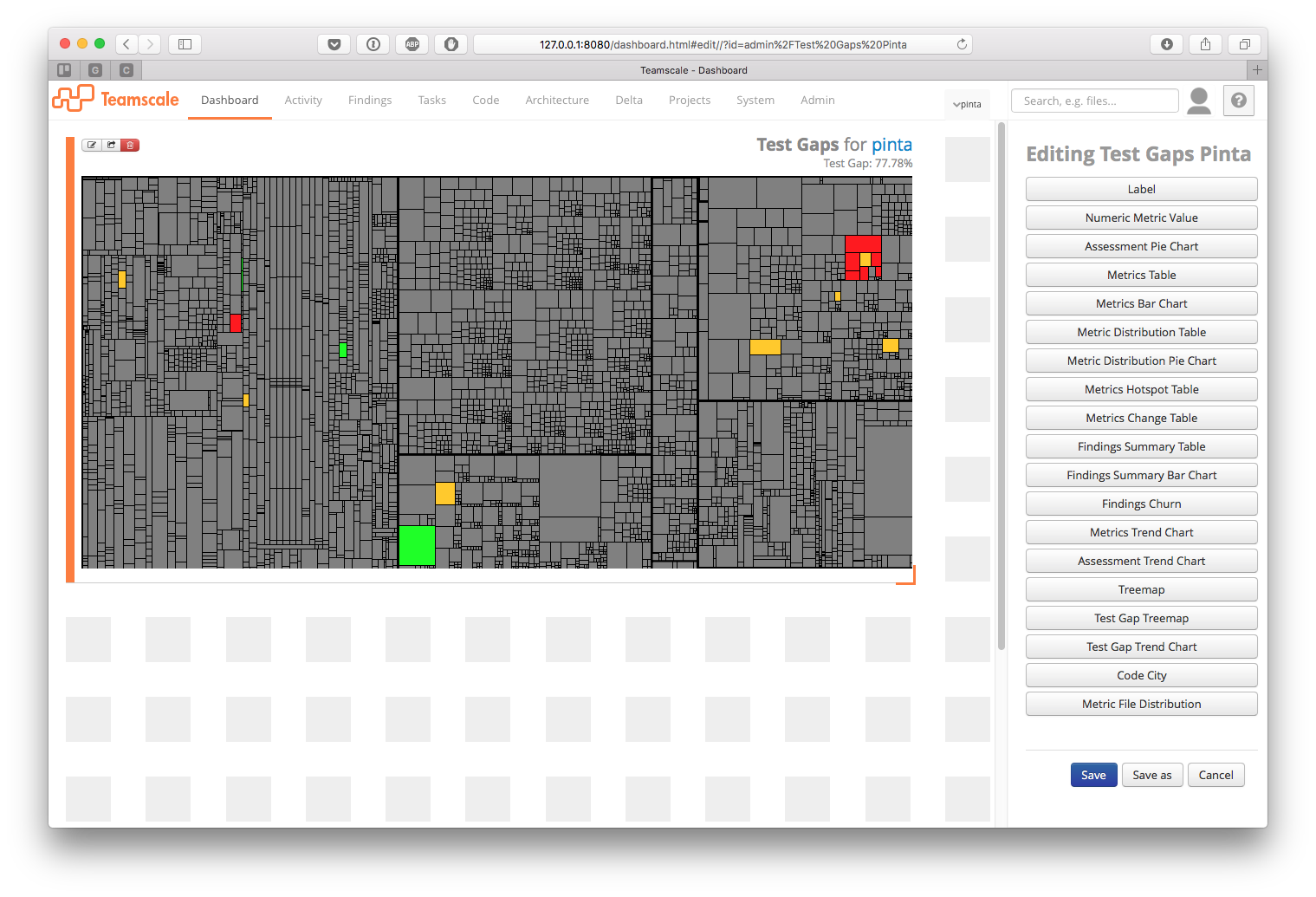

On the canvas that shows up next, you can place widgets, which visualize different types of information. In our case, we add a new Test Gap Treemap.

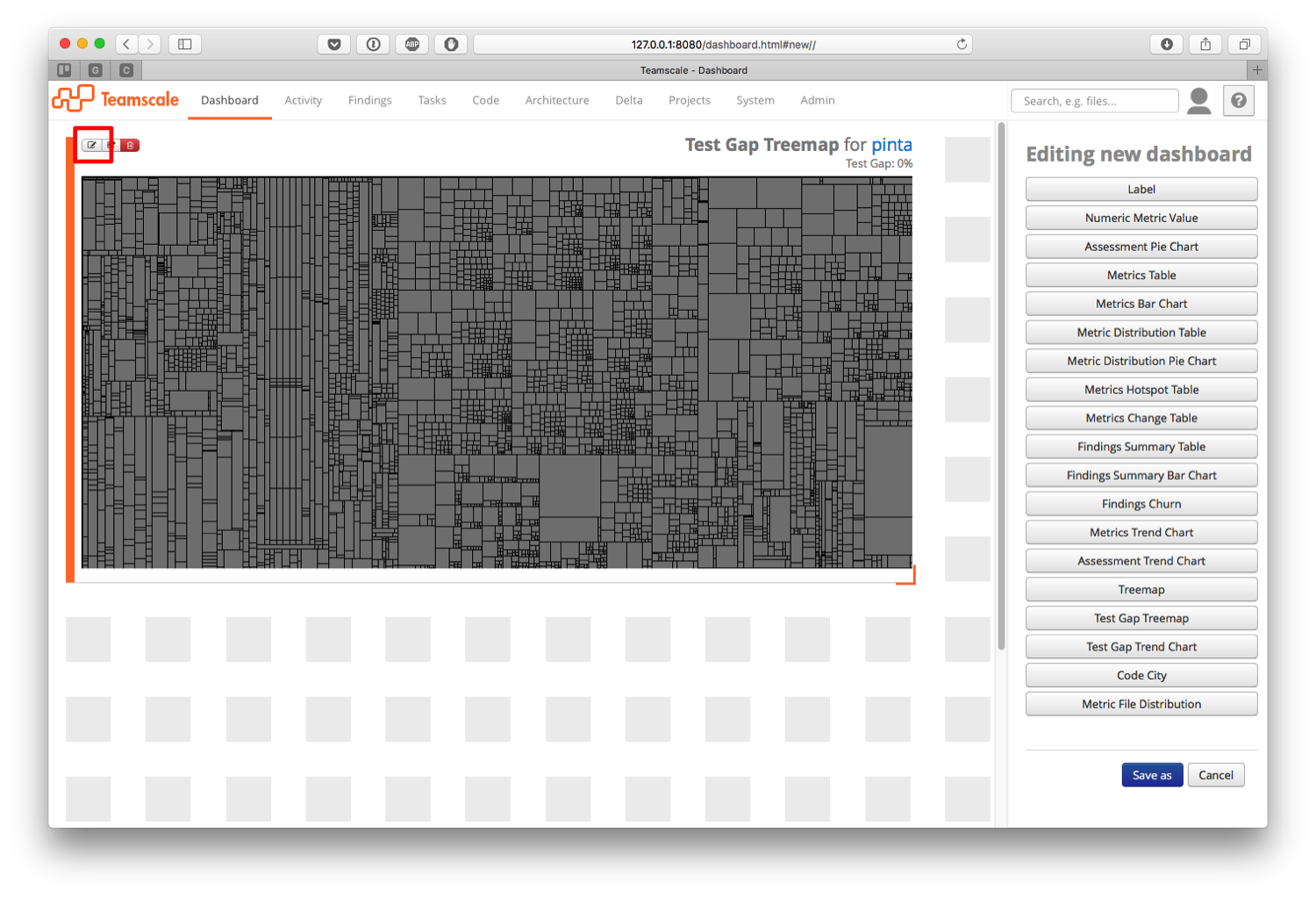

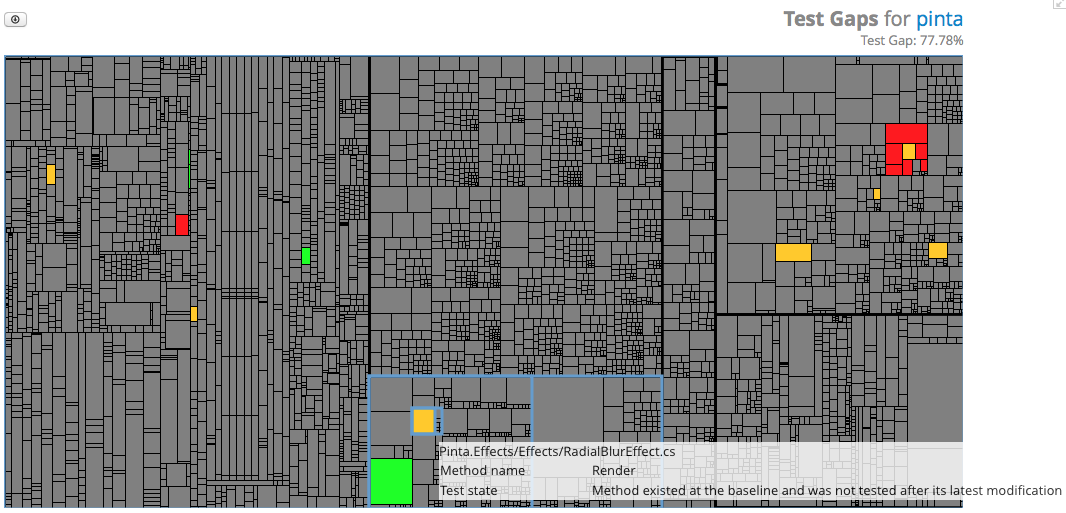

You will see a treemap, a graphical representation of the source code of your project. Every rectangle on this treemap corresponds to a method in the source code. The bigger the rectangle, the longer the method.

Go ahead and resize the widget by dragging its lower right corner until it fits your needs. Then hit the edit button at the upper left corner to configure it.

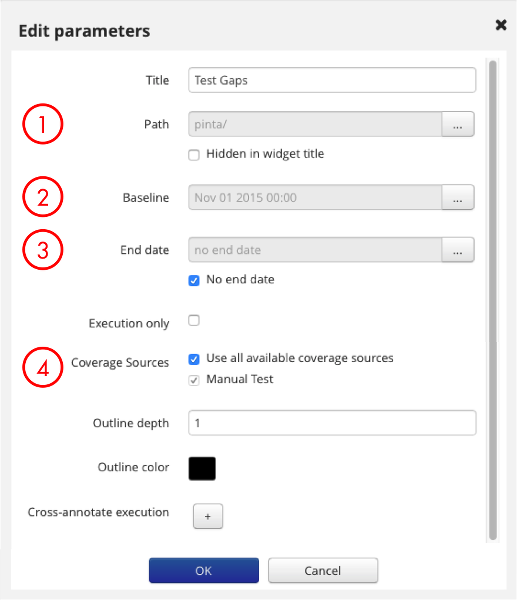

In the configuration dialog, there are several settings to play around with. I’ll walk you through the four most important ones.

- Path refers to the part of your project you want to see on the Test Gap treemap. You might want to select your whole project, but you can also choose a smaller subset, say the component you are responsible for. The treemap will then zoom into that area.

- Baseline refers to the date from which changes are considered, as described above. Choose a date slightly before the first change that is relevant to you to ensure that no changes are lost.

- End date marks the other end of the time frame. The No end date option ensures that future changes are considered as well.

- Coverage Sources are logical containers for different kinds of coverage, e.g. »Manual Test« or »Unit Test« or »Test Server 3«. Whenever you upload coverage to Teamscale, you have to specify a coverage source. Here you can select the coverage you want to consider in the Test Gap analysis by ticking the corresponding check boxes, or by selecting all coverage sources.

Hit OK to apply your changes and see the results.

You can go back and edit the widget any time you like. Now go ahead and save the dashboard.

What do the colors mean?

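Gray rectangles are methods that have not changed since the baseline you just entered. Orange rectangles are methods that were changed since the baseline, and red rectangles are methods that were added since the baseline; in both cases, the method has not been executed since its last modification. Green rectangles are changed or added methods that were executed after their last modification.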
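The color rules can be written down as a small decision function. This is only a sketch of the rules as described in this post: the function name, its parameters, and the choice to count an execution at exactly the modification time as tested are assumptions, not Teamscale's actual logic.

```python
from datetime import datetime


def treemap_color(baseline, added_at, last_modified, last_executed=None):
    """Classify one method for the treemap (illustrative only).

    gray   - not changed since the baseline
    green  - changed or added, and executed since its last modification
    red    - added since the baseline, not executed since then
    orange - changed since the baseline, not executed since then
    """
    if last_modified < baseline:
        return "gray"
    if last_executed is not None and last_executed >= last_modified:
        return "green"
    if added_at >= baseline:
        return "red"
    return "orange"


baseline = datetime(2016, 3, 1)

# Existing method, changed after the baseline, never executed since.
print(treemap_color(baseline, datetime(2015, 6, 1), datetime(2016, 3, 10)))
# orange

# New method, executed after its last modification.
print(treemap_color(baseline, datetime(2016, 3, 5), datetime(2016, 3, 10),
                    last_executed=datetime(2016, 3, 12)))
# green
```

Note that an execution that happened before the last modification does not count: the method stays orange or red until its current version has been run.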

How do I work with a Test Gap treemap?

First of all, take your time to explore the treemap, and change its configuration until it fits your needs. When hovering over the treemap with your mouse, additional information about the method under the cursor will be shown, such as the file where it resides, and the method name.

Red and orange rectangles indicate that your tests are missing certain changes. If there are test gaps, as in the example shown above, you can now make a conscious decision whether the corresponding code should be tested or not. If you want to see the code behind a method, simply click on the rectangle. For instance, the method Render in RadialBlurEffect.cs is a test gap, because it was changed after the baseline but has not been tested since its last modification. If you now test this method and upload the coverage to Teamscale, the corresponding rectangle will turn green, and you’ll have one test gap fewer.

Notice the percentage in the upper right corner of the treemap widget. It denotes the Test Gap ratio for the code shown on the treemap, i.e. the number of modified or new methods that have not been tested in their current version divided by the total number of modified or new methods. In the example, the Test Gap is over 77%, meaning that over 77% of the modifications and additions shown on the treemap have not been tested in their current version.
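As a worked example, the following sketch reads the Test Gap percentage as the share of modified or new methods that are untested in their current version. The function name and the method names are made up for illustration, and the numbers are not taken from the screenshot.

```python
def gap_ratio(changed_methods, tested_methods):
    """Share of changed (modified or new) methods that are still untested.

    Returns 0.0 when nothing has changed, since there is nothing to test.
    """
    changed = set(changed_methods)
    if not changed:
        return 0.0
    untested = changed - set(tested_methods)
    return len(untested) / len(changed)


# Nine methods were modified or added; only two of them were executed
# in their current version, so seven remain test gaps.
changed = {f"method_{i}" for i in range(1, 10)}
tested = {"method_1", "method_2"}

print(f"{gap_ratio(changed, tested):.1%}")  # 77.8%
```

Testing one more of the seven open methods would lower the ratio to 6/9, which is how the percentage on the widget shrinks as coverage is uploaded.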

It is also possible to download a CSV file containing all the methods and their corresponding test state, i.e. whether the method is unchanged, was tested, or is a test gap. That way, you can inspect the remaining test gaps in depth. To do so, simply click on the download icon at the upper left of the treemap.
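Once downloaded, such a file is easy to process with a short script. The sketch below is hedged heavily: the column names `method` and `state` and the value `test gap` are hypothetical placeholders, so check the header row of your actual export and adjust the parameters accordingly.

```python
import csv
import io

# Stand-in for the downloaded file. The column names and state values
# are assumptions, not the real export format.
SAMPLE = """method,state
Render,test gap
Load,tested
Save,unchanged
Blur,test gap
"""


def remaining_test_gaps(csv_file, method_column="method",
                        state_column="state", gap_value="test gap"):
    """Return the names of all methods whose state marks them as a test gap."""
    reader = csv.DictReader(csv_file)
    return [row[method_column] for row in reader
            if row[state_column] == gap_value]


print(remaining_test_gaps(io.StringIO(SAMPLE)))  # ['Render', 'Blur']
```

For a real export, pass an opened file instead of the `io.StringIO` sample, e.g. `open(path, newline="")`.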

Conclusion

Test Gap analysis makes changes and testing activities more transparent, and thus helps you to keep untested changes out of production. With Teamscale, you can visualize changes and testing activities with flexible graphical widgets that fit your specific needs. This helps you to synchronize development and testing over time and to bridge your remaining test gaps before the release by making conscious and informed decisions.

Want to learn more about Teamscale or try it yourself? Check out our free online demo or get your free trial from the Teamscale website.
